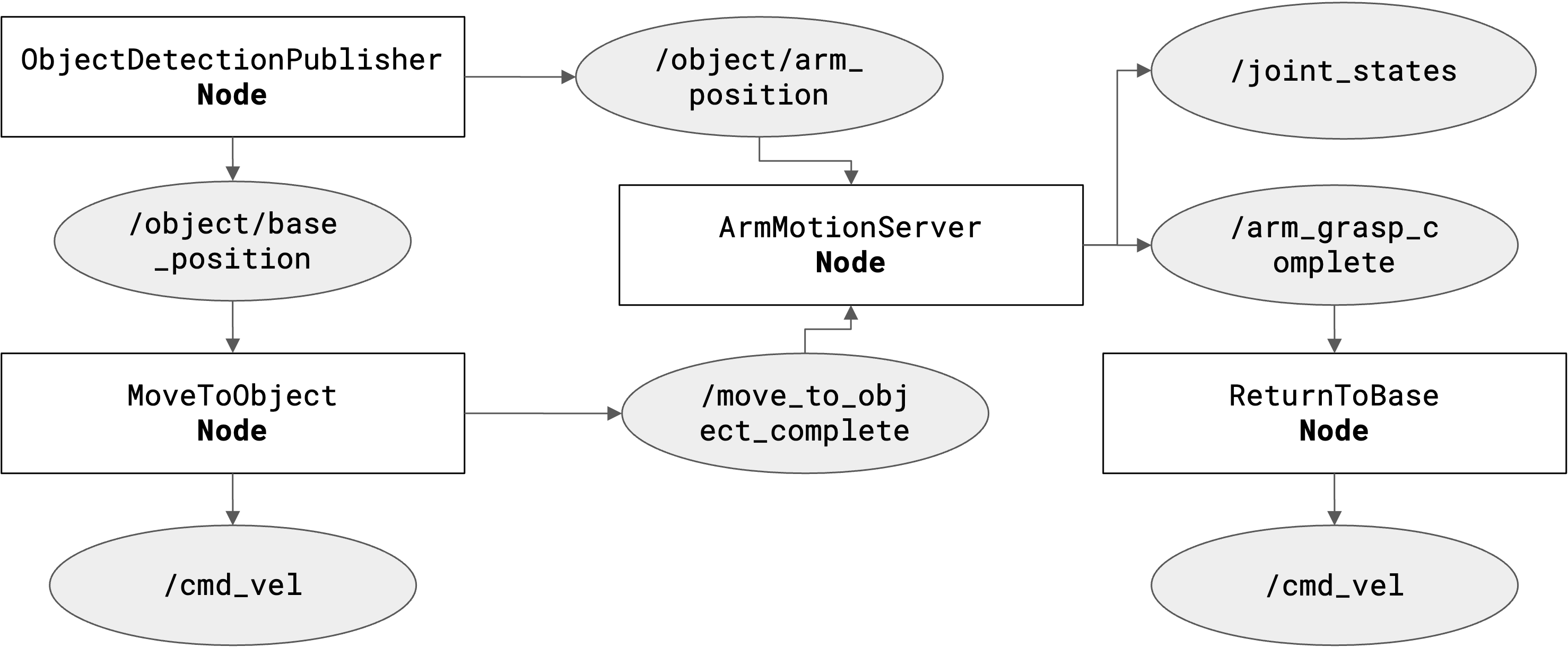

Motion & Task Planning

Autonomous behavior requires robust planning across multiple domains. We implemented Bézier curves for smooth navigation paths, a multi-stage arm trajectory to ensure collision-free grasping, and a rigid TF2 coordinate architecture to maintain spatial awareness across all robot links.
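To make the navigation piece concrete, here is a minimal sketch of sampling waypoints along a Bézier curve. It assumes a cubic curve in 2D with four control points; the source does not state the curve degree, and the names `cubic_bezier` and `sample_path` and the control-point values are hypothetical, chosen only for illustration.

```python
from typing import List, Tuple

Point = Tuple[float, float]


def cubic_bezier(p0: Point, p1: Point, p2: Point, p3: Point, t: float) -> Point:
    """Evaluate a cubic Bezier curve at parameter t in [0, 1]."""
    u = 1.0 - t
    # Bernstein basis weights for a degree-3 curve; they sum to 1 for any t.
    b0 = u * u * u
    b1 = 3.0 * u * u * t
    b2 = 3.0 * u * t * t
    b3 = t * t * t
    x = b0 * p0[0] + b1 * p1[0] + b2 * p2[0] + b3 * p3[0]
    y = b0 * p0[1] + b1 * p1[1] + b2 * p2[1] + b3 * p3[1]
    return (x, y)


def sample_path(p0: Point, p1: Point, p2: Point, p3: Point, n: int = 20) -> List[Point]:
    """Return n + 1 waypoints, evenly spaced in t (not in arc length)."""
    return [cubic_bezier(p0, p1, p2, p3, i / n) for i in range(n + 1)]


if __name__ == "__main__":
    # Hypothetical control points: start at the origin, end at (2, 1).
    for x, y in sample_path((0.0, 0.0), (1.0, 0.0), (1.0, 1.0), (2.0, 1.0), n=4):
        print(f"{x:.3f}, {y:.3f}")
```

The curve starts at `p0` and ends at `p3`, while `p1` and `p2` shape the departure and arrival headings, which is what makes this kind of path smooth to follow. Because the samples are uniform in `t` rather than in distance, a real planner would likely re-space them before handing them to a controller.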